Human beings love to make predictions, whether about the movements of the stars, the gyrations of the stock market, or the upcoming season’s hot color. Pick up the newspaper or browse through headlines on any given day, and you’ll immediately encounter a mass of predictions — so many, in fact, that you probably don’t even notice them.

Keeping score

What’s a little puzzling about all these predictions, though, is that no one seems to know how accurate they are. Sure, when pundits get something right, they’re usually happy to take credit for it (“I predicted the financial crisis,” “I predicted Facebook would eclipse MySpace,” etc.). But what if they were forced to write down every prediction they ever made and were then held to account for the ones they got wrong as well? How accurate would they be?

In the mid-1980s, psychologist Philip Tetlock set out to answer exactly this question. In a remarkable experiment that unfolded over 20 years, he convinced 284 political experts to make nearly 100 predictions each about a variety of possible future events, from the outcomes of specific elections to the likelihood of armed conflict between two nations. Tetlock insisted that the experts specify at the outset which of two outcomes they expected for each prediction and state explicitly how confident they were. Confident predictions would score more points than ambivalent ones if they were correct, but would also be penalized more if they were wrong.
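
To make the scoring idea concrete, here is a minimal sketch of one rule with that property. The text above does not say which rule Tetlock used, so this uses the Brier score as an assumed stand-in; the probabilities are made-up examples, not data from the study. Note that Brier scores run in the opposite direction from "points": lower is better.

```python
def brier_score(forecast_prob, outcome):
    """Squared gap between the stated probability and what happened.

    forecast_prob: probability the forecaster gave to the event (0.0 to 1.0)
    outcome: 1 if the event occurred, 0 if it did not
    Returns 0.0 for a perfect forecast and 1.0 for the worst possible one.
    """
    return (forecast_prob - outcome) ** 2


# Hypothetical forecasts, chosen only to illustrate the rule.
confident_right = brier_score(0.9, 1)  # confident, and the event happened
hedged_right = brier_score(0.6, 1)     # ambivalent, and the event happened
coin_flip = brier_score(0.5, 1)        # no opinion either way
hedged_wrong = brier_score(0.6, 0)     # ambivalent, and the event did not happen
confident_wrong = brier_score(0.9, 0)  # confident, and the event did not happen

for label, score in [
    ("confident and right", confident_right),
    ("hedged and right", hedged_right),
    ("coin flip", coin_flip),
    ("hedged and wrong", hedged_wrong),
    ("confident and wrong", confident_wrong),
]:
    print(f"{label:20s} {score:.2f}")
```

Under this rule, confidence pays off only when it is warranted: the confident forecaster beats the hedger when right and does worse than the hedger when wrong.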

Twenty years later, Tetlock finally tallied his results. Much to the consternation of the experts, their overall performance was only marginally better than random guessing, and not even as good as a relatively simple statistical model. Also surprising: The experts did better when operating outside their area of expertise than within it.

Tetlock’s study is unusual in its rigor, but the results are probably far more representative than most talking heads, newspaper columnists, industry analysts, and other professional prognosticators would have us believe. In cultural industries, for example, publishers, producers, and marketers have as much difficulty predicting which books, movies, and products will become the next big hit as Tetlock’s political experts had predicting world events. And whether we consider the most spectacular business meltdowns (Enron, WorldCom, Lehman Brothers) or spectacular success stories (Google), what is perhaps most striking is that virtually nobody seems to have had any idea what was about to happen.

Not bad at all predictions

Faced with this evidence, you might conclude that people are simply bad at making predictions. But that’s not right either. There are, in fact, all sorts of predictions we could make very well if we chose to. I would bet, for example, that I could do a pretty good job at forecasting the weather in Santa Fe — in fact, I bet I would be correct more than 80% of the time. That sounds impressive compared with Tetlock’s experts — until you realize that it is sunny in Santa Fe roughly 300 days per year, and therefore I can be correct 300 days out of 365 (an accuracy of 82%) simply by making the mindless prediction that tomorrow will be sunny. Likewise, predictions that the United States will not go to war with Canada next year, or that the sun will rise in the east tomorrow, are likely to be highly accurate but impress no one.
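
The Santa Fe arithmetic is easy to check, and it suggests a simple habit: compare any forecaster against the mindless baseline before being impressed. The sketch below is illustrative only. The 300-day figure is the rough number quoted above, and `skill_over_baseline` is a hypothetical helper that follows the usual shape of a skill score, not something taken from the original text.

```python
SUNNY_DAYS = 300    # rough figure quoted in the text
DAYS_IN_YEAR = 365


def always_sunny_accuracy(sunny_days, total_days):
    """Accuracy of predicting 'sunny' every single day."""
    return sunny_days / total_days


def skill_over_baseline(accuracy, baseline_accuracy):
    """Share of the baseline's errors that a forecaster manages to remove.

    0.0 means no better than the mindless baseline; 1.0 means perfect.
    Negative values mean the forecaster does worse than the baseline.
    """
    return (accuracy - baseline_accuracy) / (1.0 - baseline_accuracy)


baseline = always_sunny_accuracy(SUNNY_DAYS, DAYS_IN_YEAR)
print(f"Always-sunny accuracy: {baseline:.0%}")  # prints 82%

# A hypothetical forecaster who is right 85% of the time sounds strong,
# but most of that accuracy is already available for free.
print(f"Skill over baseline: {skill_over_baseline(0.85, baseline):.2f}")
```

Raw accuracy alone says little; what matters is how much a prediction improves on the answer anyone could have given without thinking.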

The real problem of prediction, in other words, is not that we are universally good or bad at it, but rather that we are bad at distinguishing predictions that we can make reliably from those that we can’t. And unfortunately, the predictions that we’re most enthusiastic about making are often the kind that we’re bad at.

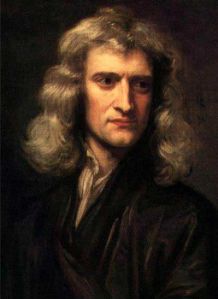

False confidence, thanks to Newton

In a way, the problem goes all the way back to the legendary physicist, Isaac Newton. Starting from his three laws of motion, along with his universal law of gravitation, Newton was able to predict not only the orbits of the planets, but also the timing of the tides, the trajectories of projectiles, and a truly astonishing array of other natural phenomena.

It was an amazing accomplishment, but it also had the effect of setting an expectation of what could be predicted that would prove difficult to match. As it turns out, very few phenomena in nature are as predictable as planets and tides. Yet Newton’s early success begat the false conclusion that everything worked the same way. Even Newton himself was susceptible to this misconception, famously wondering: “If only we could derive the other phenomena of nature from mechanical principles by the same reasoning!”

A century later, French mathematician and astronomer Pierre-Simon Laplace pushed Newton’s vision to its logical extreme, claiming in effect that Newtonian mechanics had reduced the prediction of the future, whether the fate of the universe or the outcome of the next military conflict, to a matter of mere computation. Laplace even went so far as to propose a hypothetical entity, since named Laplace’s Demon, for whom “nothing would be uncertain and the future just like the past would be present before its eyes.” People of course had been making predictions about the future since the beginnings of civilization, but what was different about Laplace’s boast was that it wasn’t based on any claim to magical powers or even special insight that he possessed himself. Rather, it depended only on the existence of scientific laws that in principle anyone could master. Thus prediction, once the realm of oracles and mystics, was brought within the objective, rational sphere of modern science.

Living in a complex world

In making this bold leap, however, Laplace obscured a critical difference between two different sorts of systems, which for the sake of argument I’ll call “simple” and “complex.” Simple systems are those for which a model can capture all or most of the variation in what we observe. The oscillations of pendulums and the orbits of satellites are therefore “simple” in this sense, even though it’s not necessarily a simple matter to be able to model and predict them.

Complex systems are another animal entirely. Nobody really agrees on what makes a complex system “complex,” but it’s generally accepted that complexity arises out of many interdependent components interacting in nonlinear ways. The U.S. economy, for example, is the product of the individual actions of millions of people, as well as hundreds of thousands of firms, thousands of government agencies, and countless other external and internal factors, ranging from the weather in Texas to interest rates in China.

Out of all these interactions arise two countervailing features of complex systems: on the one hand, tiny disturbances in one part of the system can at times get amplified to produce large effects somewhere else — reminiscent of the “butterfly effect” from chaos theory — while, on the other hand, very large shocks can at other times get absorbed with remarkable ease. And because it’s impossible to determine in advance which of these two fates any given shock will experience, it follows that the sorts of mechanistic models that work well for simple systems — and that enabled the likes of Newton and Laplace to make such accurate predictions — work poorly for complex systems.
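
The amplification half of this picture can be seen in a toy example from chaos theory. The sketch below uses the logistic map, a standard textbook system that is not mentioned in the original text; the starting value and the size of the nudge are arbitrary choices. It illustrates only how a tiny disturbance can grow, not the opposite case in which large shocks are absorbed, and it is far simpler than any real economy.

```python
def logistic_map_trajectory(x0, steps, r=4.0):
    """Iterate x -> r * x * (1 - x), returning the starting value plus `steps` updates."""
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1.0 - xs[-1]))
    return xs


# Two runs whose starting points differ by one part in ten billion.
original_run = logistic_map_trajectory(0.2, 60)
nudged_run = logistic_map_trajectory(0.2 + 1e-10, 60)

# Distance between the two runs at each step.
gaps = [abs(a - b) for a, b in zip(original_run, nudged_run)]

for step in (0, 10, 20, 30, 40, 50):
    print(f"step {step:2d}: gap = {gaps[step]:.2e}")
```

The two runs are indistinguishable at first, yet within a few dozen steps they bear no resemblance to each other, even though the rule itself is completely deterministic.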

All this helps explain why Tetlock’s experts, along with experts in marketing, business, government policy, and other areas of social and economic forecasting, have such a hard time making consistently accurate predictions: Almost invariably, they are making predictions about complex systems, and for complex systems it is no more possible to predict outcomes with certainty than it is to predict the outcome of a die roll.

Predicting what to predict

The randomness inherent to complex systems is one reason why the predictions that we make are often wrong, but there’s a second reason: Often we don’t know which predictions we should be trying to make in the first place.

Truth be told, there is an infinitude of predictions that we could make at any given time, but the only ones we care about are the very small number of predictions that — had we been able to make them correctly — would have actually mattered. Had U.S. aviation officials predicted that terrorists armed with box cutters would hijack planes and fly them into the World Trade Center and the Pentagon, they could have taken preventative measures, like strengthening cockpit doors and clamping down on airport screening, that would have averted the threat. Had an investor known in the late 1990s that the small startup company called Google would one day grow into an Internet behemoth, they could have made a fortune investing in it. These are the sorts of events that we would like to be able to predict, and when we look back in history, it seems like we ought to have been able to.

But what we don’t appreciate is that hindsight tells us more than the outcomes of the predictions that we could have made in the past. It also reveals what predictions we should have been making in the first place. How was one to know before 9/11 that the strength of cockpit doors, not the absence of bomb-sniffing dogs, was the key to preventing airplane hijackings? Or that hijacked aircraft, and not dirty bombs or nerve gas in the subway, were the main terrorist threat to the U.S.? How was one to know that search engines would make money from advertising and not some other business model? Or that one should even be interested in the monetization of search engines rather than content sites or e-commerce sites, or something else entirely?

Presumably some people did predict all these things, just as some people predicted the global financial crisis of 2008. But that doesn’t help us much either, because for every one of those people, there were plenty of others making the opposite prediction, or making predictions about other events entirely: events that either never took place or turned out not to be that important. At the end of the day, making the right prediction is just as important as getting the prediction right, but it is only at the end of the day that we know which prediction was the right prediction.

Some grounds for optimism

If this sounds hopeless, it is, but only if we aspire to a level of certainty about the future that is at odds with the fundamental randomness of the world. If we acknowledge that randomness, there are still useful predictions we can make, just as blackjack players who count cards can make useful predictions without ever knowing with 100% confidence which particular card is going to show up next.

Retailers like Wal-Mart, for example, can mine their shoppers’ behavior prior to hurricanes to learn that when the next hurricane is imminent, they need to stock up on Pop-Tarts. Google and Yahoo! have both shown that search queries can be used to track the spread of influenza — or to predict the box office revenues of movies. Credit card companies can predict when their customers are likely to default based on their payment histories, airlines have gotten remarkably good at pricing their seats so as to maximize their profit per seat while still filling their planes, and casinos have learned how to optimize their slot machine algorithms to keep gamblers playing for longer.

Some kinds of predictions, in other words, are possible, and once we learn to recognize them, there are many things we can do to improve our performance. But the flip side of this lesson is that some predictions are simply not possible, and that in these cases we need to develop approaches to planning that don’t depend upon making predictions that we can’t make.

For example, we probably can’t predict the timing and nature of the next financial crisis, the next political revolution, or the next transformational technology trend. So rather than wasting our breath pontificating, we should instead devote our energies to building systems that are robust to the unexpected, and making plans that can work under a variety of scenarios. Finally, we can change our approach to planning altogether, moving away from plans that revolve around predictions and instead embracing those that depend on being able to react very quickly to what is happening in the present.

When it comes to predictions, in other words, there are no crystal balls and no silver bullets. But once we acknowledge the futility of Laplace’s dream — not to mention the pretensions of experts who think they can outsmart the law of unintended consequences — there are many ways to do better.

Tarot cards photo courtesy of Ian Crowthers, FlickR

This post is adapted from “Everything is Obvious*: Once You Know The Answer.”

Author Duncan Watts, a principal research scientist at Yahoo! Labs, started off academic life as a mathematician with a B.Sc. in physics and a Ph.D. in theoretical and applied mechanics before becoming a tenured professor of sociology at Columbia University. When not debunking pop sociology theories, Watts has served as an officer in the Royal Australian Navy, climbed the nose of El Capitan, and hula-hooped for New York Magazine.